Nickel alloys are the backbone of high-performance industrial systems operating in extreme environments, from chemical processing reactors to aerospace turbine components and offshore marine infrastructure. Their combination of high-temperature strength, exceptional corrosion resistance, and metallurgical stability makes them irreplaceable in applications where carbon steels and stainless steels fail prematurely. When you buy nickel alloy materials, the primary determinant of long-term operational reliability is not just the nominal alloy grade but the verified metallurgical and performance properties that align with your specific operating conditions.

Key Metallurgical Properties That Define Nickel Alloy Material Performance

The functional performance of nickel alloys is rooted in their chemical composition and resulting microstructure, which dictate both mechanical behavior and corrosion resistance. Unlike carbon steels, which rely on carbon content for strength, nickel alloys use a carefully balanced blend of alloying elements to achieve targeted properties. Chromium, for example, forms a stable passive chromium oxide layer to resist oxidizing environments and high-temperature scaling, while molybdenum and tungsten enhance resistance to localized pitting and crevice corrosion in chloride-rich media. Elements like niobium and titanium stabilize carbon to prevent intergranular sensitization during welding, while copper improves performance in reducing acids such as sulfuric and hydrofluoric acid.

Chemical Composition & Performance Correlation for Common Nickel Alloy Grades

Even within the same nominal alloy grade, minor variations in alloying element content can lead to dramatic differences in real-world performance. For example, a 0.5 wt% reduction in molybdenum content in Alloy 625 can lower its Critical Pitting Temperature (CPT) by up to 25°C, significantly reducing its resistance to localized corrosion in seawater or acidic chloride environments. Similarly, excess carbon content above 0.01 wt% in low-carbon nickel grades can lead to chromium carbide precipitation along grain boundaries during welding, a phenomenon known as sensitization, which renders the material susceptible to intergranular corrosion in service.

| Alloy Grade | Nominal Nickel Content (wt%) | Key Alloying Elements (wt%) | Critical Pitting Temperature (CPT, °C) | Ultimate Tensile Strength (MPa, Annealed) | Primary Corrosion Resistance Focus |
|---|---|---|---|---|---|
| Alloy 400 | 63-70 | Cu: 28-34, Fe: ≤2.5 | 0-5 | 485-585 | Reducing acids, hydrofluoric acid |
| Alloy 600 | 72 minimum | Cr: 14-17, Fe: 6-10 | 10-15 | 550-690 | High-temperature oxidation, caustic environments |
| Alloy 825 | 38-46 | Cr: 19.5-23.5, Mo: 2.5-3.5, Cu: 1.5-3.0 | 35-45 | 620-760 | Sulfuric acid, moderate chloride environments |
| Alloy 625 | 58 minimum | Cr: 20-23, Mo: 8-10, Nb: 3.15-4.15 | ≥110 | 760-900 | Seawater, pitting/crevice corrosion, high-temperature strength |
| Alloy C276 | 57 minimum | Cr: 14.5-16.5, Mo: 15-17, W: 3-4.5 | ≥115 | 740-890 | Severe reducing/oxidizing environments, universal corrosion resistance |
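
As a rough illustration of how the composition windows in the table above might be applied to a mill test report, the following Python sketch flags elements that fall outside the listed ranges. The `SPEC` dictionary simply transcribes the table; the function name `check_composition` and the sample heat analysis are hypothetical and not taken from any standard or real certificate.

```python
# Composition windows (wt%) transcribed from the table above.
# None means the table gives no bound on that side.
SPEC = {
    "Alloy 400": {"Ni": (63.0, 70.0), "Cu": (28.0, 34.0), "Fe": (None, 2.5)},
    "Alloy 600": {"Ni": (72.0, None), "Cr": (14.0, 17.0), "Fe": (6.0, 10.0)},
    "Alloy 825": {"Ni": (38.0, 46.0), "Cr": (19.5, 23.5), "Mo": (2.5, 3.5), "Cu": (1.5, 3.0)},
    "Alloy 625": {"Ni": (58.0, None), "Cr": (20.0, 23.0), "Mo": (8.0, 10.0), "Nb": (3.15, 4.15)},
    "Alloy C276": {"Ni": (57.0, None), "Cr": (14.5, 16.5), "Mo": (15.0, 17.0), "W": (3.0, 4.5)},
}


def check_composition(grade, measured):
    """Return (element, value, low, high) tuples for every out-of-window element.

    An element missing from `measured` is reported with value None.
    """
    failures = []
    for element, (low, high) in SPEC[grade].items():
        value = measured.get(element)
        if value is None:
            failures.append((element, None, low, high))
            continue
        too_low = low is not None and value < low
        too_high = high is not None and value > high
        if too_low or too_high:
            failures.append((element, value, low, high))
    return failures


# Hypothetical heat analysis, for illustration only (not real test data).
heat = {"Ni": 61.2, "Cr": 21.5, "Mo": 7.6, "Nb": 3.5}
for element, value, low, high in check_composition("Alloy 625", heat):
    print(f"{element}: {value} wt% is outside [{low}, {high}]")
```

A check like this only confirms that a heat sits inside the nominal window. It says nothing about where in the window the heat falls, which is exactly the within-grade variation discussed above.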

Mechanical & Thermal Performance Validation for Nickel Alloy Materials

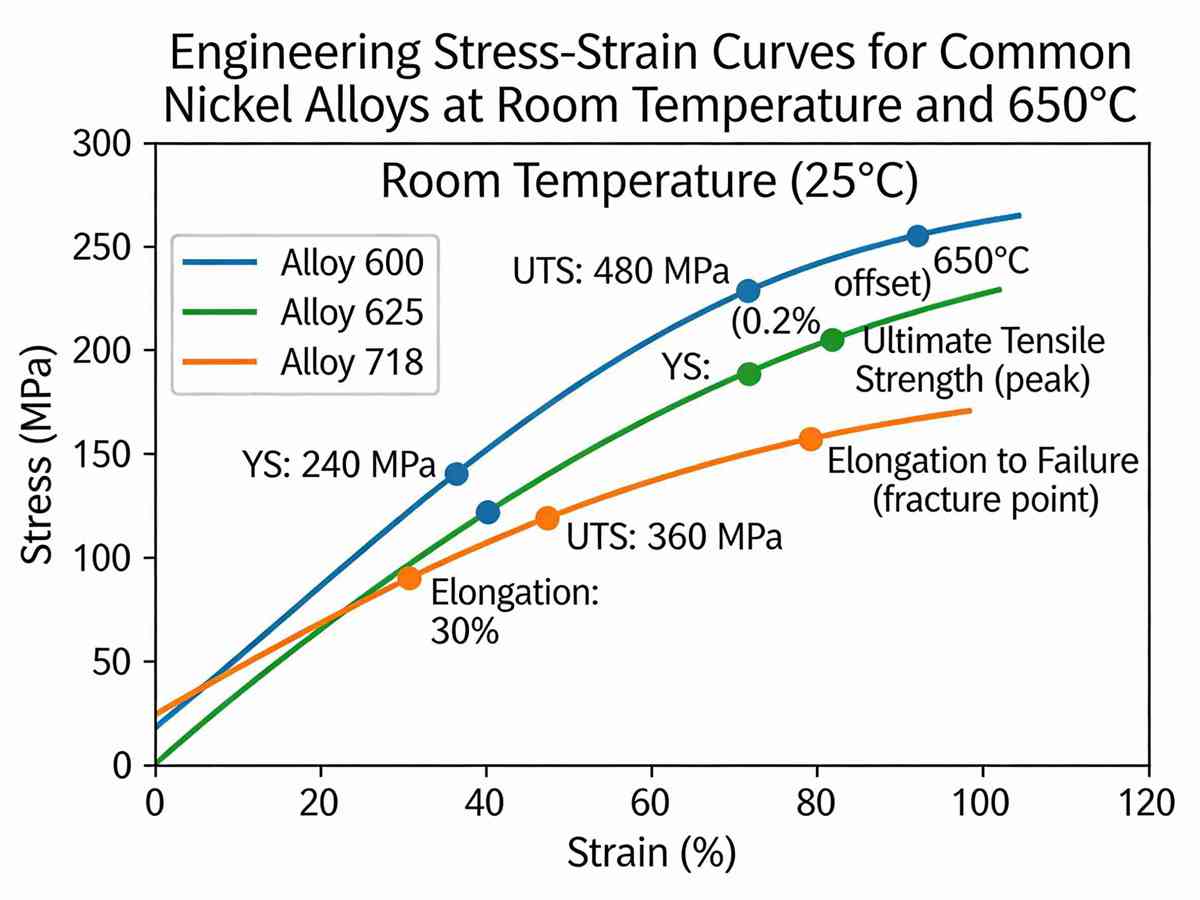

Beyond chemical composition, the mechanical properties of nickel alloy materials are critical for applications involving structural loading, high pressure, or elevated temperatures. When evaluating material suitability, engineers must verify not just room-temperature tensile strength, but also elevated-temperature creep strength, fatigue resistance, and impact toughness, particularly for cryogenic or high-stress cyclic applications.

For example, precipitation-hardened nickel alloys such as Alloy 718 deliver ultimate tensile strengths exceeding 1250 MPa after proper aging heat treatment, making them ideal for aerospace turbine discs and downhole oil and gas tools operating at temperatures up to 650°C. In contrast, solution-annealed austenitic nickel alloys like Alloy C276 offer superior impact toughness at cryogenic temperatures as low as -196°C, with no ductile-to-brittle transition, making them suitable for liquefied natural gas (LNG) processing equipment.

Heat treatment is the single most influential factor in controlling these mechanical properties. Improper solution annealing temperatures, insufficient hold times, or slow cooling rates can lead to the formation of brittle intermetallic phases such as sigma phase, which can reduce impact toughness by up to 70% and increase susceptibility to corrosion fatigue failure. For most corrosion-resistant nickel alloys, rapid quenching from the solution annealing temperature is required to retain alloying elements in solid solution and prevent detrimental phase precipitation.

Corrosion Resistance Testing Protocols for Nickel Alloy Materials

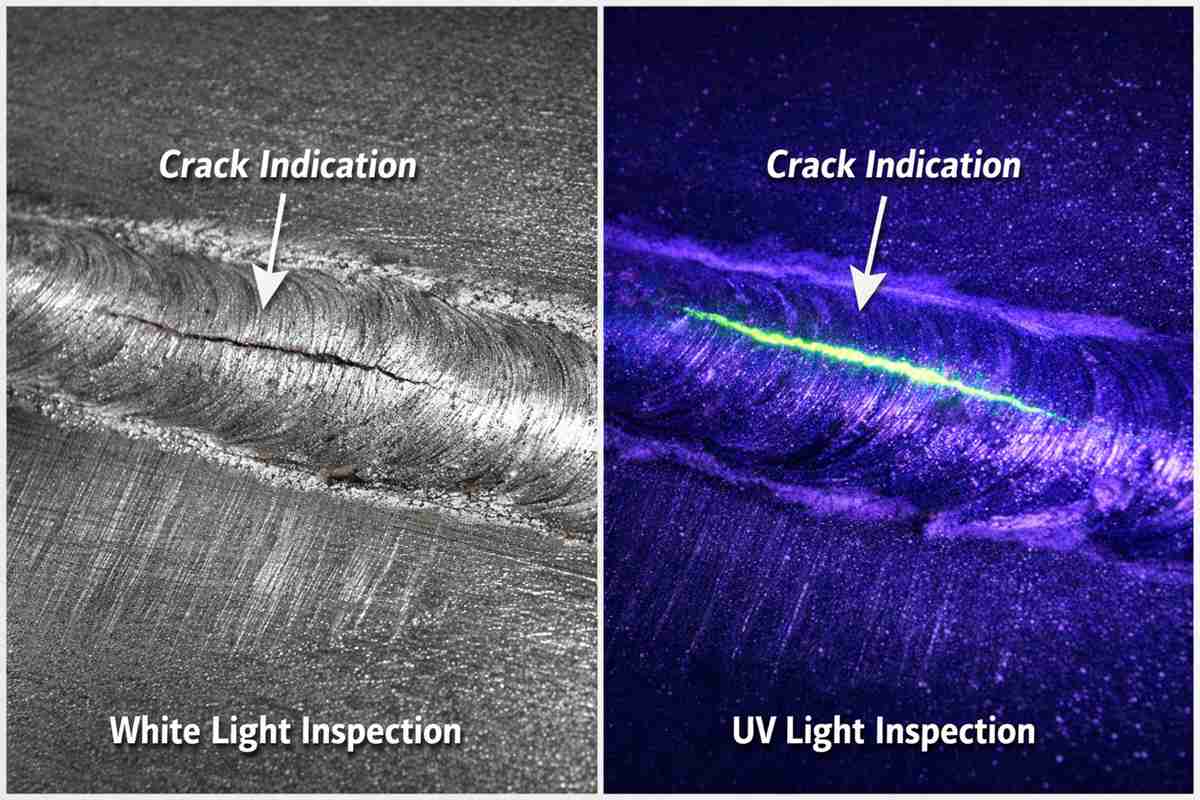

The most common cause of nickel alloy component failure is premature corrosion, which can occur even in nominally suitable environments if the material does not meet specified corrosion resistance criteria. When you buy nickel alloy materials, validating corrosion performance through standardized testing is critical to mitigating in-service failure risk.

Critical Pitting Temperature (CPT) testing per ASTM G48 Method C is the industry standard for evaluating localized pitting resistance in chloride-rich environments, measuring the minimum temperature at which pitting initiates in a 6% ferric chloride solution. For offshore and marine applications, a minimum CPT of 80°C is typically required, while severe chemical processing applications may demand CPT values exceeding 100°C.

For applications involving welding, intergranular corrosion testing per ASTM A262 is essential to verify that the material is not susceptible to sensitization. The Strauss test (ASTM A262 Practice E) exposes the material to a boiling copper sulfate-sulfuric acid solution, with any intergranular cracking indicating sensitization that will lead to premature failure in corrosive service. For alloys used in high-temperature steam or caustic environments, stress corrosion cracking (SCC) testing per ASTM G36 or G30 is required to validate resistance to cracking under tensile load in aggressive media.
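
The CPT thresholds quoted above can be turned into a simple screening check. The Python sketch below is a minimal example under stated assumptions: the service category keys and function names are hypothetical labels, and treating "exceeding 100°C" as "at least 100°C" is a simplification made for this example.

```python
# Minimum CPT values (°C) quoted in the text above.
# The dictionary keys are hypothetical labels chosen for this sketch.
MIN_CPT_C = {
    "offshore_marine": 80.0,
    "severe_chemical_processing": 100.0,
}


def cpt_margin(service, measured_cpt_c):
    """Return measured CPT minus the quoted minimum for the service category.

    A negative result means the material falls short of the threshold.
    """
    return measured_cpt_c - MIN_CPT_C[service]


def meets_cpt(service, measured_cpt_c):
    """True when the measured CPT is at or above the quoted minimum (simplified)."""
    return cpt_margin(service, measured_cpt_c) >= 0.0


# Hypothetical measured value, for illustration only.
measured = 85.0
for service in MIN_CPT_C:
    status = "pass" if meets_cpt(service, measured) else "fail"
    print(f"{service}: margin {cpt_margin(service, measured):+.1f} °C ({status})")
```

Reporting the margin rather than a bare pass/fail makes it easier to see how close a heat sits to the acceptance limit for a given service.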

Microstructural Quality Control for Nickel Alloy Materials

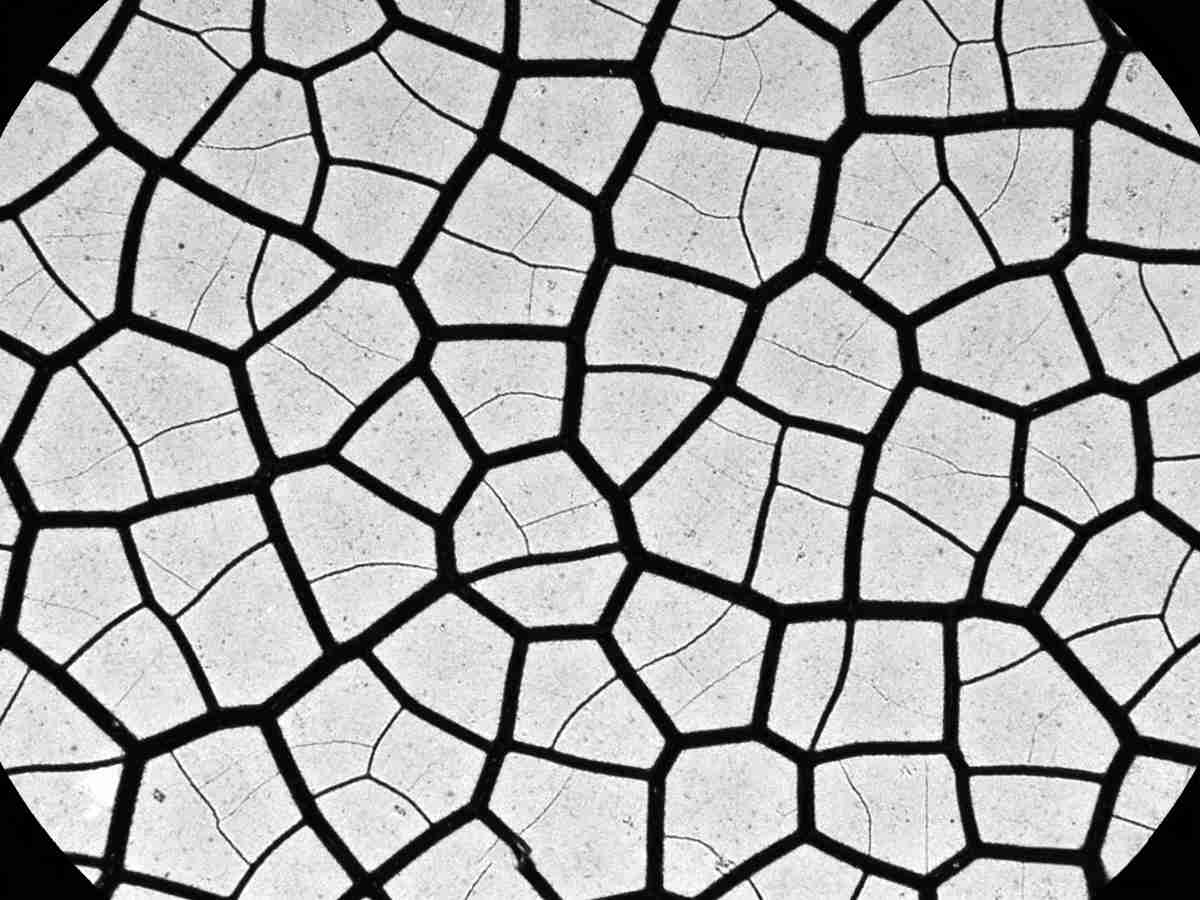

Even with correct chemical composition and heat treatment, microstructural defects such as non-metallic inclusions, segregation, and uneven grain size can compromise the performance of nickel alloy materials. Non-metallic inclusions, primarily sulfides and oxides, act as initiation sites for pitting corrosion and fatigue cracks, particularly in high-stress cyclic applications. Premium nickel alloy materials typically have inclusion ratings of ≤2 per ASTM E45, ensuring minimal defect density and consistent performance.

Grain size uniformity is another critical microstructural parameter. Coarse, uneven grain structure can lead to inconsistent mechanical properties, reduced fatigue resistance, and poor formability during fabrication. For most structural nickel alloy applications, a uniform grain size between ASTM 3 and 5 is specified, balancing high-temperature creep resistance (favored by coarser grains) and room-temperature toughness and formability (favored by finer grains).
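
The two microstructural criteria above (inclusion rating ≤2 per ASTM E45, grain size between ASTM 3 and 5) could be screened along the following lines. This is a sketch only: the `MicrostructureReport` structure, its field names, and the sample values are assumptions made for illustration, not a format defined by either standard.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class MicrostructureReport:
    """Hypothetical summary of a metallographic report."""
    inclusion_rating: float   # worst inclusion rating reported per ASTM E45
    grain_sizes: List[float]  # ASTM grain size numbers from several locations


def microstructure_issues(report, max_inclusion=2.0, grain_min=3.0, grain_max=5.0):
    """Return human-readable findings; an empty list means both criteria are met."""
    issues = []
    if report.inclusion_rating > max_inclusion:
        issues.append(
            f"inclusion rating {report.inclusion_rating} exceeds {max_inclusion}"
        )
    # Note: a larger ASTM grain size number means a finer grain.
    outliers = [g for g in report.grain_sizes if not grain_min <= g <= grain_max]
    if outliers:
        issues.append(
            f"grain size readings outside ASTM {grain_min}-{grain_max}: {outliers}"
        )
    return issues


# Hypothetical readings, for illustration only.
report = MicrostructureReport(inclusion_rating=1.5, grain_sizes=[3.5, 4.0, 6.5])
for finding in microstructure_issues(report):
    print(finding)
```

Checking every reading rather than an average is a deliberate choice here, since the concern described above is uneven grain structure, which an average can hide.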

The long-term reliability of nickel alloy components in extreme industrial environments is entirely dependent on the intrinsic quality and verified performance of the base material. Nominal alloy grade alone is not sufficient to guarantee suitability for your specific operating conditions; every aspect of the material, from chemical composition and heat treatment to microstructure and corrosion resistance, must be validated to meet your application’s unique requirements. When you buy nickel alloy materials, prioritizing verified metallurgical and performance data is the only way to eliminate the risk of premature failure, costly downtime, and safety hazards in service.

For application-specific material selection guidance, customized testing protocol development, or detailed metallurgical analysis tailored to your operating conditions, our team of nickel alloy material engineers is available to provide dedicated technical support.

Related Q&A

Q1: What is the most critical test to perform when evaluating nickel alloy materials for chloride-rich offshore applications?

A1: The most critical validation is Critical Pitting Temperature (CPT) testing per ASTM G48 Method C, which quantifies the minimum temperature at which pitting corrosion initiates in a standardized 6% ferric chloride solution. For offshore splash zone and subsea applications, a minimum CPT of 80°C is typically required to resist localized corrosion in high-chloride, cyclic temperature conditions. High-performance alloys such as Alloy 625 and Alloy C276 deliver CPT values exceeding 110°C, making them suitable for the most severe offshore environments.

Q2: How does improper heat treatment degrade the performance of nickel alloy materials?

A2: Improper heat treatment is the leading cause of hidden performance degradation in nickel alloys. For solution-annealed corrosion-resistant grades, insufficient annealing temperature or slow cooling rates allow the formation of brittle intermetallic phases (such as sigma phase) and chromium carbide precipitates along grain boundaries. This reduces both impact toughness (by up to 70% in severe cases) and corrosion resistance, particularly intergranular and pitting corrosion. For precipitation-hardened alloys like Alloy 718, incorrect aging temperatures or hold times prevent the formation of nano-scale γ″ and γ′ strengthening phases, resulting in a 40-50% reduction in high-temperature tensile and creep strength.

Q3: What trace element limits are most critical for weldable nickel alloy materials?

A3: For weldable nickel alloys, the most tightly controlled trace elements are carbon, sulfur, and phosphorus. Carbon content must be limited to ≤0.01 wt% for low-carbon grades (e.g., Alloy C276, Alloy 625-LC) to prevent chromium carbide precipitation and sensitization during welding, which causes intergranular corrosion in service. Sulfur is typically limited to ≤0.01 wt% (and ≤0.005 wt% for critical welding applications) to eliminate the formation of low-melting-point nickel-sulfur eutectics, which are the primary cause of weld hot cracking. Phosphorus is limited to ≤0.02 wt% to reduce weld solidification cracking risk and improve overall corrosion resistance.
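
The limits in A3 can be expressed as a small lookup and check. The Python sketch below assumes a heat analysis supplied as a plain dictionary of wt% values; the function name, the `critical_weld` flag, and the sample analysis are hypothetical. The carbon limit shown is the one quoted for low-carbon grades and would not apply to every alloy.

```python
# Maximum trace element contents (wt%) quoted in A3 above.
# The carbon limit is the one given for low-carbon grades.
LIMITS_WT_PCT = {"C": 0.01, "S": 0.01, "P": 0.02}
CRITICAL_WELD_SULFUR = 0.005  # tighter sulfur limit for critical welding applications


def trace_element_violations(analysis, critical_weld=False):
    """Map each over-limit element to (measured, limit).

    Raises KeyError if the analysis does not report C, S, or P, so that a
    missing value is never silently treated as a pass.
    """
    limits = dict(LIMITS_WT_PCT)
    if critical_weld:
        limits["S"] = CRITICAL_WELD_SULFUR
    return {
        element: (analysis[element], limit)
        for element, limit in limits.items()
        if analysis[element] > limit
    }


# Hypothetical heat analysis, for illustration only.
analysis = {"C": 0.008, "S": 0.007, "P": 0.015}
print(trace_element_violations(analysis))
print(trace_element_violations(analysis, critical_weld=True))
```

With these sample values the heat passes the general limits but is flagged for sulfur once the tighter critical-welding limit is applied.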